Something broke in marketing this year, and the numbers prove it. Brands are generating more content than ever, driven by AI tools that promise efficiency and scale. But consumers aren’t engaging with it.

Half of the surveyed consumers can now correctly identify AI-generated content, and when they do, their behavior shifts dramatically. About 52% report reduced engagement with content they believe is AI-generated. This isn’t a minor preference. This is a trust problem becoming a revenue problem.

When Disclosure Backfires

The transparency paradox reveals itself in stark numbers. AI-generated ads with notice disclosures experience a 73% increase in ad trustworthiness and a 96% increase in overall trust for the company. That sounds promising until you examine the baseline. Only 38% of consumers share a positive sentiment toward AI, compared to 77% of advertisers who view AI in a positive light.

There’s a massive perception gap between marketers and their audiences. Advertisers see efficiency gains. Consumers see something else entirely. Even technically polished AI content faces a “trust penalty” where consumers react warily when they sense a message was created by a machine.

Labeling content as AI-generated satisfies compliance requirements, but it doesn’t address the underlying concern. When you tell someone a machine wrote your message, you’re admitting there’s no human connection. Honesty doesn’t necessarily make people trust you more.

The Search Engine Reality Check

Google settled the debate about AI content quality with action, not policy statements. AI-generated content without human oversight typically scores 40% lower on E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals.

Consumer perception mirrors search algorithms. AI-generated creative is consistently assessed as more “annoying,” “boring,” and “confusing” than ads made through traditional methods.

According to research from NielsenIQ, even high-quality AI ads fail to create lasting impressions compared to human-created content.

The pattern appears across channels. About 62% of consumers are less likely to engage with or trust content on social media if they know it was generated using AI. In addition, 26% find AI-generated website copy impersonal, while 20% deem AI-generated social media posts untrustworthy.

Why Emotional Content Fails

When AI attempts to establish an emotional connection, consumer reaction intensifies. AI-generated emotional communications elicit moral disgust, reduce positive word of mouth, and diminish brand loyalty. This response comes from a fundamental expectation: emotional content should originate from emotional beings.

Research published in the Journal of Business Research demonstrates this “AI-authorship effect” across multiple studies. When consumers believe emotional content has been generated by AI, they experience disgust that affects their relationship with the brand. Consumers are calling for increased regulation of generative AI in marketing, with concerns about assessing authenticity as the foremost reason.

Authenticity matters more than ever.

The Human Oversight Multiplier

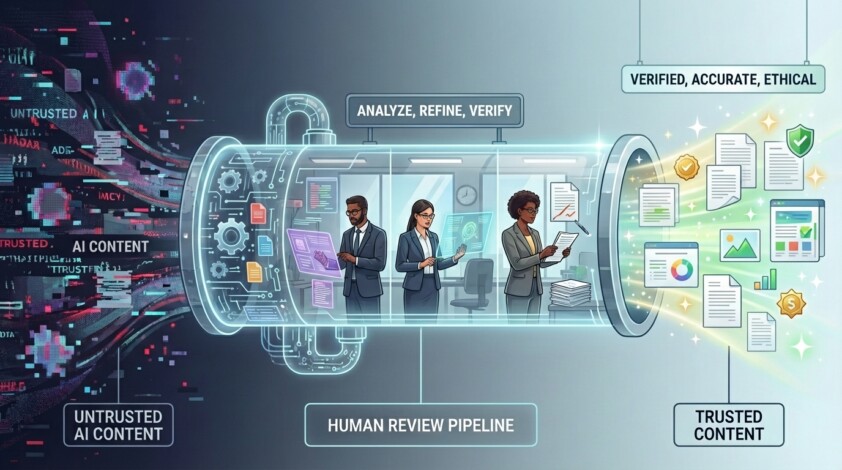

The successful approach combines AI capability with human judgment through structured workflows. AI content that includes human strategic oversight performs 4.1x better than fully automated output. Not marginally better. Four times better.

A study reveals that 73% of marketers use a hybrid approach, with human editors polishing AI drafts. Among successful implementations, subject matter experts fact-check and refine each AI draft before publishing, allowing AI content to perform as well as human-generated content in rankings and traffic.

SmythOS enables this approach through agent orchestration. The platform supports language chains, where one model refines another’s output; agent chains, where one agent evaluates another’s work; and human-in-the-loop patterns, where people verify outputs at critical stages.

Components operate independently with air-gapped data flows, preventing cross-contamination while maintaining workflow coherence.
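
The chaining and review patterns described above can be illustrated with a minimal, generic Python sketch. It is not the SmythOS API: `run_chain`, `human_gate`, `write_draft`, `refine_draft`, and `editor_review` are hypothetical names, and the two "model" steps are plain string functions standing in for real model calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List

Step = Callable[[str], str]


@dataclass
class Draft:
    text: str
    history: List[str] = field(default_factory=list)  # every intermediate version
    approved: bool = False


def run_chain(brief: str, steps: List[Step]) -> Draft:
    """Pass text through each step in order, so each step refines the previous output."""
    draft = Draft(text=brief)
    for step in steps:
        draft.text = step(draft.text)
        draft.history.append(draft.text)
    return draft


def human_gate(draft: Draft, reviewer: Callable[[Draft], bool]) -> Draft:
    """Human-in-the-loop checkpoint: nothing counts as approved until a reviewer says so."""
    draft.approved = reviewer(draft)
    return draft


# Hypothetical stand-ins for model calls.
def write_draft(brief: str) -> str:
    return f"DRAFT: {brief}"


def refine_draft(text: str) -> str:
    return text.replace("DRAFT", "REFINED")


# Hypothetical stand-in for a person's decision; a real gate would wait for input.
def editor_review(draft: Draft) -> bool:
    return draft.text.startswith("REFINED")


result = human_gate(run_chain("launch announcement", [write_draft, refine_draft]), editor_review)
print(result.text, result.approved)
```

Keeping the full `history` alongside the final text is a deliberate choice in this sketch: a reviewer can see what each step changed instead of only the end result.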

Building Verification Into Production

The failure rate tells the real story. Companies are automating content production without automating quality control.

SmythOS addresses this gap through workflow components designed for content verification. The platform features grounding capabilities that enhance AI reasoning by integrating data from RAG systems, APIs, and verified databases. Your content agents check facts against your knowledge base in real-time rather than relying solely on training data.
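
As a rough illustration of checking draft content against a knowledge base, the sketch below compares extracted claims with trusted values and flags anything that does not match. It is a simplified assumption of how such a check might look, not SmythOS code: `check_claims`, the dictionary-based knowledge base, and all sample values are hypothetical placeholders, and a production system would query a RAG index, API, or database instead.

```python
from typing import Dict, List, Tuple

# Hypothetical knowledge base with placeholder values.
KNOWLEDGE_BASE: Dict[str, str] = {
    "founding_year": "2015",
    "headquarters": "Austin",
}


def check_claims(claims: Dict[str, str], kb: Dict[str, str]) -> Tuple[List[str], List[str]]:
    """Split claim keys into those the knowledge base confirms and those it does not."""
    verified: List[str] = []
    flagged: List[str] = []
    for key, value in claims.items():
        if kb.get(key) == value:
            verified.append(key)
        else:
            # Either the value contradicts the knowledge base or there is no entry at all.
            flagged.append(key)
    return verified, flagged


# Claims assumed to have been extracted from an AI draft (placeholder data).
draft_claims = {
    "founding_year": "2015",
    "headquarters": "Denver",
    "employee_count": "500",
}

verified, flagged = check_claims(draft_claims, KNOWLEDGE_BASE)
print("verified:", verified)
print("needs review:", flagged)
```

Claims with no knowledge-base entry are flagged rather than passed, so unverifiable statements go to a human instead of being published by default.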

The architecture supports tree-of-thought processes, where multiple models approach content from different perspectives, and reasoner chains that perform deep analysis before evaluation. These aren’t theoretical capabilities. They’re production patterns built into the runtime environment.

Beyond the Pilot-to-Production Gap

Most AI content initiatives fail because companies treat AI as a replacement rather than an amplification system. They scale output without scaling oversight. SmythOS’s approach treats agents as orchestrated systems where specialized agents handle specific aspects of content quality, similar to how human content teams structure roles across research, writing, editing, and fact-checking.

The platform offers built-in observability, including execution traces for every component, real-time logs, and performance metrics. You can see exactly what each agent did, why it made specific choices, and how content evolved through your workflow. This transparency matters for quality control and accountability.
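
To make the idea of per-component execution traces concrete, here is a small generic sketch that records each step's input, output, and duration. It does not reflect the platform's actual tracing format: the `traced` decorator, the `TRACE` list, and the `write` and `edit` steps are hypothetical.

```python
import time
from functools import wraps
from typing import Any, Callable, Dict, List

# In-memory trace log; a real system would send these records to a log store.
TRACE: List[Dict[str, Any]] = []


def traced(component: str) -> Callable[[Callable[[str], str]], Callable[[str], str]]:
    """Wrap a text-processing step so every call appends a record to TRACE."""
    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        @wraps(fn)
        def wrapper(text: str) -> str:
            start = time.perf_counter()
            output = fn(text)
            TRACE.append({
                "component": component,
                "input": text,
                "output": output,
                "seconds": time.perf_counter() - start,
            })
            return output
        return wrapper
    return decorator


@traced("writer")
def write(brief: str) -> str:
    return f"Draft about {brief}"


@traced("editor")
def edit(text: str) -> str:
    return text.strip() + "."


final = edit(write("spring sale"))
for record in TRACE:
    print(record["component"], "->", record["output"])
```

Because each record stores both input and output, the log shows how the content changed at every stage, which is the property that makes later accountability reviews possible.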

The path forward requires infrastructure that maintains authenticity at scale.

Star SmythOS on GitHub to follow the development of the SmythOS Runtime Environment.